The Agentic Economy Demands Verifiability: The Billion-Dollar Case for Trusted Compute

AI agents are already generating billions, but their biggest problem is not growth. It is trust. As agents make higher-stakes decisions, verifiable compute becomes the missing layer between autonomy and accountability.

Everyone is talking about AI agents. Almost no one is naming them.

Open any tech newsletter in April 2026 and you'll see the same lazy framing: "Salesforce is doing AI agents." "Microsoft is doing AI agents." "Google is doing AI agents." As if these companies woke up one morning and decided to sprinkle some agent dust on their earnings reports.

That framing is useless. It hides the real story. And worse, it conflates three fundamentally different things: platforms that let you build agents (Agentforce, Glean Agents), assistants that help humans do things (Copilot, Now Assist), and actual autonomous agents that make decisions and take actions without a human in the loop. The first two are important. Only the third category is what this article is about.

The real story is that specific, individual AI agents - with names you can say out loud, pricing models you can audit, and ARR numbers you can verify - are generating revenue at a pace that makes the entire SaaS era look slow. A legal AI agent is drafting settlement language on $50 million cases. Customer service agents are resolving a billion interactions a year without a human touching the ticket. Autonomous systems are making decisions where a wrong call means lawsuits, lost customers, or a $2 million pharmaceutical shipment arriving spoiled. Yes, a coding agent crossed $2 billion in ARR faster than any B2B product in history. But code can be reverted. A botched legal filing cannot.

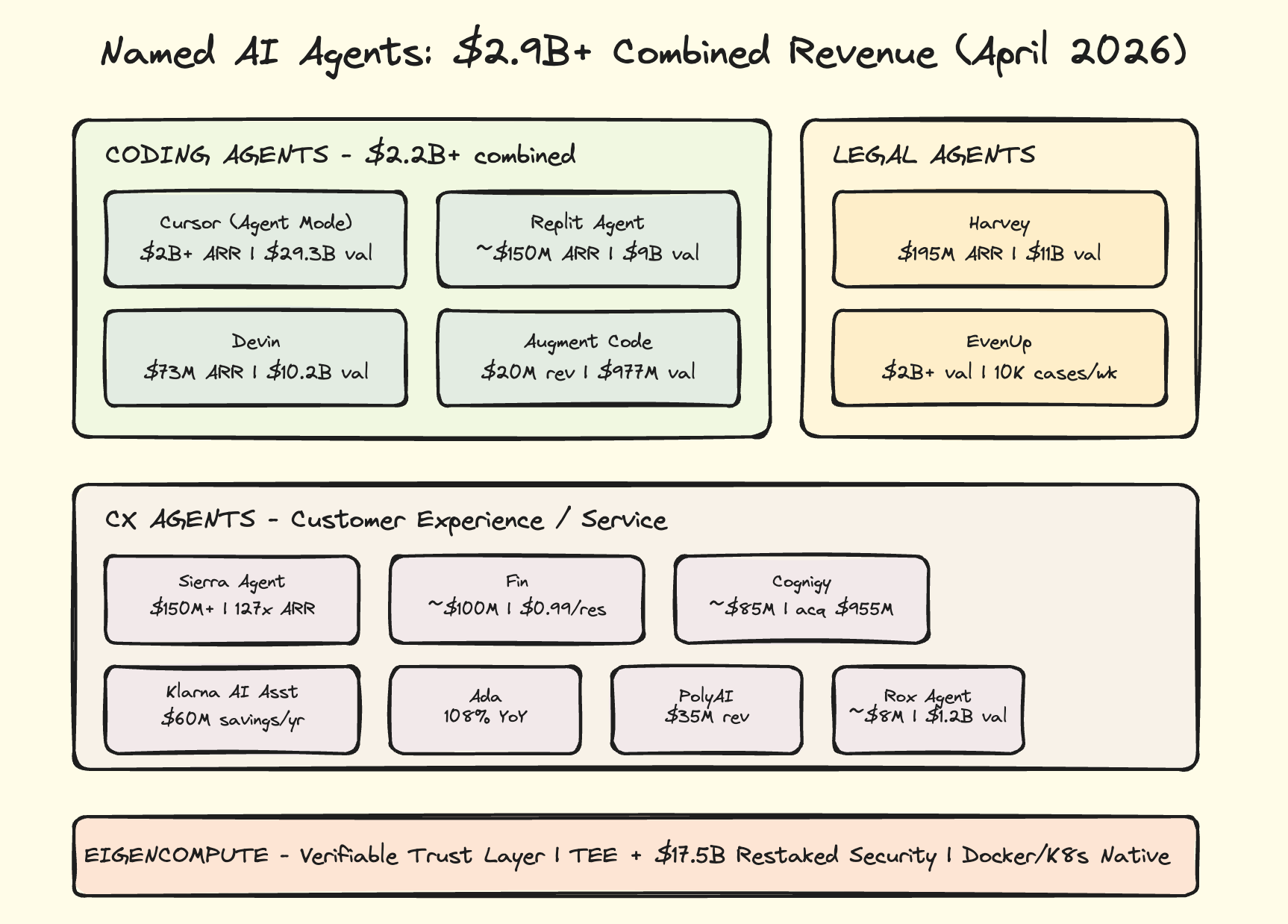

And there is a second story underneath the first one, which almost nobody is telling at all: these agents are making decisions worth millions of dollars, and there is no way to verify they made those decisions correctly.

That gap - between what agents are doing and what anyone can prove they did - is the most important unsolved problem in AI. It is also where EigenCompute enters this story. But we'll get there. First, the money.

The AI agents market hit $7.63 billion in 2025 according to Grand View Research, with projections pointing to $182.97 billion by 2033 at a 49.6% CAGR. The autonomous agents sub-market alone is headed to $253.3 billion by 2034 per Dimension Market Research. Total VC investment in AI hit $340 billion in H1 2026 alone per Silicon Valley Bank.

But those are projections. Here are the agents actually collecting the checks.

The $100M-to-$2B Pipeline Nobody Expected

Cursor is the single fastest-growing B2B SaaS product in recorded history.

It crossed $2 billion in annualized revenue in February 2026 according to Bloomberg, doubling in three months from the $1 billion ARR milestone hit during Anysphere's November 2025 Series D raise of $2.3 billion. Sacra's estimates track the trajectory: $100M in early 2025, $500M by June, $1B by November, $2B by March 2026. That is $100M to $2B in 14 months.

The pricing is deceptively simple ($20/month for Pro, $40/month for Business), but the volume is staggering. With the April 2026 launch of Cursor 3, the product shifted from a code editor to an agent management console. The IDE became the fallback, not the primary interface. Cursor now orchestrates entire coding workflows: it reads requirements, writes code, runs tests, fixes failures, and ships to production. When Cursor 3 launched agent mode as the default, usage per seat jumped 3x. Users are paying for an agent, not an editor.

Anysphere's valuation hit $29.3 billion. The company generates roughly $13.7 million in ARR per employee. For context, Salesforce generates about $290K per employee. Cursor's revenue efficiency is 47x that of the largest enterprise software company on earth.

But Cursor is not alone in the coding vertical. Replit Agent builds entire applications from natural language descriptions: it does not just write code but makes architectural decisions, chooses frameworks, designs database schemas, handles deployment configuration, and resolves build errors autonomously. It hit $100M ARR in mid-2025 and scaled to approximately $150M by September 2025. In March 2026, Replit raised $400 million at a $9 billion valuation and announced a trajectory toward $1B ARR by end of year. Its effort-based pricing model, which charges based on the complexity of what the agent builds rather than time spent, is the purest expression of agent economics yet. You pay for the output, not the process.

And then there is Devin, Cognition's autonomous software engineering agent, which went from $1M ARR in September 2024 to $73M ARR by June 2025, a 73x revenue increase in nine months. Cognition was valued at $10.2 billion. Devin's pricing evolution tells its own story about where this market is going: it launched at $500/month, positioned as a premium high-autonomy agent for engineering teams. Then in early 2026 the price dropped to $20/month, matching Cursor's Pro tier. The technology commoditizes fast. The value of verified, trustworthy execution does not.

Augment Code adds a fourth data point: $20M in revenue by October 2025, over 100K developers, Fortune 500 customers, $227M Series B at a $977M valuation. Unlike code completion tools, Augment's agents make independent architectural and implementation decisions based on deep contextual understanding of the full codebase.

Cursor’s annual recurring revenue topped $2 billion in February, according to a source, a figure that underscores the fast growth of the artificial intelligence coding assistant https://t.co/2erg8R6IN6

— Bloomberg (@business) March 2, 2026

Four autonomous coding agents. Combined ARR north of $2.2 billion. Growing at rates between 20x and 73x year-over-year. This is the vertical where agent economics hit first and hardest, and it happened because coding is a domain where the output is immediately testable. Bad code can be reverted. Tests can be rerun. The feedback loop is tight.

The harder question is what happens when agents operate in domains where mistakes can't be easily reversed.

Where Agent Mistakes Actually Cost Money

Harvey is the legal AI agent that nearly quadrupled revenue in 2025, going from $50M ARR at end of 2024 to $195M ARR by end of 2025. It crossed $100M in August 2025. In March 2026, Harvey raised $200 million at an $11 billion valuation per The Economic Times, a 56x ARR multiple that reflects something deeper than just growth.

Harvey is not a legal document search tool. It is an autonomous legal agent that manages entire workflows: contract analysis, due diligence, regulatory compliance, litigation research, and document drafting. CEO Gabriel Pereyra has built Harvey with a personalized memory system that learns from each law firm's specific practices, precedents, and preferences. With over 1,000 enterprise customers, primarily AmLaw 100 firms and Fortune 500 legal departments, the growth rate (3.9x year-over-year) at this revenue scale is nearly unprecedented in enterprise SaaS.

The memory system is the key insight. The longer a law firm uses Harvey, the more Harvey learns about that firm's specific practices and preferences, making it harder to switch and more valuable every month. This is the "memory moat": an economic flywheel where agent usage creates stickiness, which in turn creates more usage. It's the same dynamic that made enterprise SaaS sticky, but compressed from years to months.

EvenUp is doing something similar in personal injury law: autonomous agents that draft documents, review cases, manage communications, and handle administrative tasks across the full case lifecycle. They process 10,000+ cases per week, have resolved 200,000+ cases securing over $10B in damages, and raised a $150M Series E at a $2B+ valuation in October 2025. The agent doesn't assist lawyers. It autonomously handles the routine case management that previously required paralegals and junior associates.

Now consider the economics. A junior associate at an AmLaw 100 firm bills $400-600/hour. Harvey handles the same contract analysis or due diligence workflow for a fraction of the cost. EvenUp processes 10,000 cases a week that would have required dozens of paralegals. The cost compression in legal is even more extreme than in coding, and the stakes of getting it wrong are orders of magnitude higher. When Harvey's agent drafts settlement language and the model hallucinates a precedent, a $50 million case can collapse.

The same dynamic plays out in customer experience, where the cost differentials are even more stark.

The CX Agents Are Not Chatbots

We’ve just announced Monitors in Fin.

— Intercom (@intercom) March 25, 2026

Giving you complete control over your conversation quality, Monitors continuously watch and evaluate quality against your standards across both @Fin_ai and human conversations. So you have confidence that every conversation is handled the… pic.twitter.com/N0dTHSWV1T

There is a critical distinction that most AI coverage misses: the difference between a chatbot that surfaces FAQ answers and an autonomous agent that resolves customer issues end-to-end, processing refunds, updating orders, escalating to specialists, and closing tickets without human intervention. The agents below are the latter.

Fin is Intercom's autonomous customer service agent. Intercom reported $400 million in total recurring revenue in March 2026, with Fin as a standalone product approaching the $100 million milestone per the Business Post. Fin's pricing model is the clearest example of per-outcome agent economics in the market: $0.99 per resolution. Not per message. Not per conversation. Per resolution. If Fin resolves a customer's issue (refund processed, question answered, bug triaged), that's $0.99. If it doesn't resolve it, Intercom doesn't charge. A human agent handling tier-1 support costs $15-$25 per resolution when you factor in salary, benefits, training, and overhead. Fin costs $0.99. That is a 15-25x cost reduction per resolved ticket. That kind of differential doesn't just create a product; it creates an economic inevitability.
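The per-resolution arithmetic is simple enough to check directly. The sketch below uses only the figures quoted above; the function names and the 10,000-ticket volume are illustrative assumptions, not Intercom data.

```python
# Figures quoted in the article; function names are illustrative.
FIN_PRICE_PER_RESOLUTION = 0.99      # USD, charged only on a resolved issue
HUMAN_COST_RANGE = (15.00, 25.00)    # USD, fully loaded tier-1 cost per resolution


def cost_reduction_multiple(human_cost: float, agent_cost: float) -> float:
    """How many times cheaper the agent is per resolved ticket."""
    return human_cost / agent_cost


def monthly_savings(resolutions: int, human_cost: float) -> float:
    """Savings if every one of `resolutions` tickets moves from human to agent."""
    return resolutions * (human_cost - FIN_PRICE_PER_RESOLUTION)


low, high = (cost_reduction_multiple(c, FIN_PRICE_PER_RESOLUTION)
             for c in HUMAN_COST_RANGE)
print(f"{low:.1f}x to {high:.1f}x cheaper per resolution")

# Hypothetical volume: 10,000 resolved tickets a month at the low-end human cost.
print(f"${monthly_savings(10_000, 15.00):,.0f} saved per month")
```

Note that the multiple only holds if a billed "resolution" is a genuinely resolved issue, which is the verification question the rest of this article turns on.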

Sierra Agent entered year three with over $150M in ARR per its February 2026 blog post. Founded by former Salesforce co-CEO Bret Taylor and ex-Google AI chief Clay Bavor, Sierra reached $100M ARR in just seven quarters, a pace matched by only a handful of SaaS companies in history. After a $175M raise in late 2024 and $350M more in 2025, Sierra's $10 billion valuation works out to roughly 67x its $150M ARR, among the highest multiples of any AI agent company at this revenue scale. That premium exists because Sierra's agents operate as full customer experience layers for brands like WeightWatchers, Sonos, SiriusXM, and ADT, handling customer interactions end-to-end: troubleshooting technical issues, processing transactions, managing subscriptions, handling returns. They don't just deflect support tickets. They replace entire customer experience departments.

Cognigy hit approximately $85M in annualized revenue by mid-2025, growing at roughly 80% year-over-year, processing over one billion annual interactions globally across 1,000+ enterprise brands. In July 2025, NICE Ltd. announced its acquisition of Cognigy for approximately $955 million, a clear signal that autonomous CX agents had hit the point where incumbents will pay nearly $1 billion to avoid being disrupted by them. Cognigy's agents handle complex multi-turn voice and chat interactions with humanlike reasoning: maintaining real-time memory across conversations, using integrated tools to process payments and update accounts, and making autonomous resolution decisions across 100+ languages without human escalation.

PolyAI adds another dimension: autonomous voice AI agents handling complex customer service calls end-to-end across 45+ languages in 2,000+ live production deployments. The company hit $35M in revenue in 2025 with U.S. sales expected to triple, and raised $86M at a $750M valuation in December 2025. These agents manage multi-turn voice conversations, from password resets to complex billing disputes, making autonomous decisions without human intervention.

Klarna AI Assistant rounds out the CX picture, not as a revenue-generating product but as the single most financially impactful internal AI agent deployment in the world. It replaced 700 full-time customer service equivalents within Klarna's operations, generates approximately $60 million in annual cost savings, handles customer inquiries across 35 markets and 23 languages, resolved 2.3 million conversations in its first month of full deployment, and cut average resolution time from 11 minutes to under 2 minutes. Klarna used this as a key narrative in its IPO process: proof that a single AI agent deployment can generate more financial value than many funded AI startups.

And Ada, the autonomous customer service agent that resolves 70%+ of inquiries without human escalation, doubled its agentic AI ARR year-over-year (108% growth) by end of 2025, with 550+ agents deployed handling 6.4 billion+ interactions across telecom, fintech, and e-commerce.

Rox Agent, valued at $1.2B with projected ~$8M ARR by end of 2025, takes agent economics into sales: it doesn't assist salespeople; it executes entire sales workflows autonomously, from researching prospects to personalizing outreach to qualifying leads. The 150x ARR multiple signals massive investor confidence that autonomous sales agents will follow the same adoption curve as CX agents.

Adding It Up, and What the Numbers Actually Mean

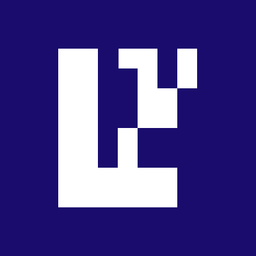

The combined figure for just the named agents documented in this article, Cursor ($2B+), Harvey ($195M), Replit Agent (~$150M), Sierra Agent ($150M+), Fin (~$100M), Cognigy (~$85M), Devin ($73M), Klarna AI Assistant ($60M savings), PolyAI ($35M), Augment Code ($20M), and Rox Agent (~$8M), comes to roughly $2.9 billion (Klarna's number is cost savings rather than revenue). Every one of these is a real agent making autonomous decisions, not an assistant waiting to be prompted and not a platform for building agents.
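For readers who want to check the headline total, here is a minimal sketch that adds up the figures listed above. It drops the "+" and "~" qualifiers, so the inputs are approximate floors rather than audited numbers, and the variable names are illustrative.

```python
# ARR (or savings) in millions of USD, as quoted in this article.
# "+" and "~" qualifiers are dropped, so treat these as approximate floors.
AGENT_FIGURES_M = {
    "Cursor": 2000,
    "Harvey": 195,
    "Replit Agent": 150,
    "Sierra Agent": 150,
    "Fin": 100,
    "Cognigy": 85,
    "Devin": 73,
    "Klarna AI Assistant": 60,   # annual cost savings, not revenue
    "PolyAI": 35,
    "Augment Code": 20,
    "Rox Agent": 8,
}

total_m = sum(AGENT_FIGURES_M.values())
revenue_only_m = total_m - AGENT_FIGURES_M["Klarna AI Assistant"]

print(f"All listed figures: ${total_m / 1000:.2f}B")
print(f"Excluding Klarna's savings: ${revenue_only_m / 1000:.2f}B")
```

The floors sum to just under $2.9 billion; because Cursor and Sierra are quoted as "at least" figures, the true combined number sits at or above that line.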

The revenue multiples tell you where investors believe the deepest moats and highest autonomy lie. Customer experience agents command the highest multiples: Sierra Agent at roughly 67x ARR ($10B on $150M). The logic: CX agents replace entire departments, not individual tasks. Legal agents are close behind, with Harvey at 56x ARR on $11B, because legal work is high-value, high-complexity, and deeply moated once the agent learns a firm's practices. Coding agents show a wide spread: Replit Agent at roughly 60x ($9B on $150M), Cursor at roughly 15x ($29.3B on $2B). Cursor's lower multiple reflects scale maturity and commoditization risk, while Replit's higher multiple reflects growth trajectory. Voice and CX agents in acquisition mode, such as Cognigy at roughly 11x ($955M on ~$85M), carry an acquisition discount, not a value discount.

Amjad Masad’s Replit allows users to build apps together like they’re doodling on a white board. It also made the Jordanian immigrant a billionaire along the way.

— Forbes (@Forbes) April 5, 2026

Read more about how this AI company is reimagining vibe coding: https://t.co/MyfNumfMrF

📸: Cody Pickens for Forbes pic.twitter.com/r3cpikBG5w

The average AI agent company trades at 52x ARR per AI Funding Tracker data in 2026. The best exit environment since 2021 is heavily concentrated in AI mega-deals.

These are not speculative companies with theoretical revenue models. These are agents generating billions in measurable revenue, with pricing models you can verify, growing at rates that break every SaaS benchmark ever recorded. The market is not asking "will AI agents make money?" anymore. They are making money.

The market is now asking a different question, the one this entire article has been building toward.

So legal and CX agents are handling high-stakes, high-volume decisions where a wrong call has real consequences. But the single largest revenue concentration in AI agents isn't in either of those categories. It's in coding.

The Domain Where None of This Works Without Proof

Everything above (Cursor writing code, Fin resolving tickets, Harvey drafting contracts, Cognigy handling a billion voice interactions) operates in digital space. The stakes are real but contained. A bad code commit can be reverted. A wrong customer service response can be corrected. A flawed legal draft gets caught in review.

But there is one domain where AI agents are making autonomous decisions that cannot be undone, where a wrong call means a $2 million pharmaceutical shipment arrives spoiled, a container ship docks at the wrong port, or a cold chain breaks and lives are at risk.

The AI in logistics market hit $17.96 billion in 2024 and is projected to reach $707.75 billion by 2034 at a 44.4% CAGR. The agentic AI segment specific to supply chain was $8.67 billion in 2025. And this is where the thesis shifts from revenue to trust.
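The logistics projection can be sanity-checked with the standard compound-growth formula. This is a minimal sketch using only the three numbers quoted above; the function names are illustrative, and small differences from the published figure come from rounding in the quoted CAGR.

```python
def project(start_value: float, cagr: float, years: int) -> float:
    """Compound a starting value forward at a constant annual growth rate."""
    return start_value * (1 + cagr) ** years


def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Annual growth rate that takes start_value to end_value in `years`."""
    return (end_value / start_value) ** (1 / years) - 1


# Quoted figures: $17.96B in 2024, $707.75B by 2034, 44.4% CAGR.
projected_2034 = project(17.96, 0.444, 10)
rate = implied_cagr(17.96, 707.75, 10)

print(f"Projected 2034 market: ${projected_2034:.1f}B")
print(f"Implied CAGR: {rate:.1%}")
```

The quoted start value, end value, and growth rate are mutually consistent to within rounding, which is more than can be said for many market-size headlines.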

AI agents are not just tools used by shipping companies. AI agents ARE shipping companies. When an AI agent receives a shipment order, it autonomously selects carriers based on real-time pricing and reliability data, generates routing options accounting for weather and traffic, negotiates spot rates through carrier APIs, monitors shipment progress, proactively reroutes around disruptions, generates customs documentation, and handles exception management. It operates 24/7, processes thousands of decisions per hour, and improves with every shipment. That is not an assistant. That is a logistics operator.

Real deployments are cutting costs by 15-30%, improving forecast accuracy by 75%, and reducing emergency expedites by millions of dollars per year per MindStudio's 2026 logistics guide. By 2026, 57% of executives expect agentic AI will make proactive recommendations, and 62% expect AI agents will make autonomous decisions in supply chain operations per IBM research.

AI agents are set to modernize the trillions of dollars of traditional software industry for the age of AI.

— Rohan Paul (@rohanpaul_ai) February 2, 2026

Here, Jensen Huang explains that and why hundreds of billions of dollars of VC money is getting invested in AI now 🎯

video from CES 2026 https://t.co/XCyXh5VaH0 pic.twitter.com/396yglX1HA

No standalone logistics AI agent product has broken out the way Fin or Devin have in their categories yet. FedEx invested over $2 billion in technology modernization, with its Surround platform using sensor data and AI to make millions of package routing decisions daily. Maersk runs AI-driven autonomous systems across its 700+ vessel fleet. DHL Supply Chain uses AI systems for predictive inventory management and dynamic route optimization. Flexport automates freight forwarding with AI. But these are internal systems, not named agent products you can buy.

The opportunity is massive and unfilled: no one has built the "Fin for logistics." The company that does will be operating in a $708 billion addressable market by 2034.

And this is where the entire article comes together. Because when Fin resolves a customer service ticket wrong, the customer gets a bad answer and maybe a $10 refund. When an autonomous logistics agent reroutes a $2 million pharmaceutical cold-chain shipment and the temperature breaks, people can die. When Harvey's agent drafts settlement language and the model hallucinates a precedent, a $50 million case collapses.

The agents in this article are generating $2.9 billion in combined revenue. They're making real, consequential decisions every second of every day. And the trust model for all of it is: hope. Hope that the model did what it said it did. Hope that the inputs were what they claimed. Hope that no one tampered with the execution.

That is not good enough. Not for $500,000 freight contracts. Not for $50 million legal settlements. Not for production code deployed to millions. This is the gap. And this is where the story arrives at its most important chapter.

Verifiability Is the Missing Layer

Every named agent above runs on compute infrastructure that operates on faith. You trust that Cursor's model produced the code it says it produced. You trust that Fin resolved the ticket it billed you $0.99 for. You trust that Harvey's contract analysis used the model version and inputs it claims.

For $20/month coding assistance, faith is fine. For a $500,000 autonomous freight routing decision or a legal document that determines a $50 million settlement, faith is not enough. You need proof.

The standard mitigations each have serious limitations when applied to general-purpose AI agent compute. ZK proofs are computationally feasible for arithmetic circuits but remain impractical for large neural network inference: proving a single forward pass of a 70B parameter model in ZK would require orders of magnitude more compute than the inference itself. zkML is progressing, but production-grade ZK verification for frontier models is years away. Optimistic fraud proofs require a verifier to re-execute the computation. But most GPU inference is non-deterministic, due to floating-point operation ordering, kernel race conditions, and opportunistic memory reuse, so re-execution doesn't produce bitwise-identical outputs, which makes fraud detection ambiguous. Reputation systems are game-theoretically weak: a node can build reputation honestly, then exploit it for a single high-value computation.
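The floating-point point is easy to see even without a GPU. The sketch below is a CPU-only illustration, not a reproduction of any real inference kernel: it adds the same three numbers in two different orders, the way two parallel reductions might, and hashes the exact bytes of each result the way a bitwise fraud check would.

```python
import hashlib
import struct


def digest(value: float) -> str:
    """SHA-256 over the exact IEEE-754 bytes, as a bitwise comparison sees them."""
    return hashlib.sha256(struct.pack("<d", value)).hexdigest()


# Same three inputs, two different reduction orders.
left_to_right = (0.1 + 0.2) + 0.3
right_to_left = 0.1 + (0.2 + 0.3)

print(left_to_right, right_to_left)
print("numerically close:", abs(left_to_right - right_to_left) < 1e-12)
print("bitwise identical:", digest(left_to_right) == digest(right_to_left))
```

Both results are "correct" to any reasonable tolerance, yet their digests differ. A fraud proof that compares hashes of re-executed output cannot tell this benign divergence apart from tampering, which is the ambiguity described above.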

None of these solve the core problem: how do you get cryptographic certainty about what happened inside a compute environment, without re-executing the entire workload?

EigenCompute: The Architectural Answer

EigenCompute doesn't try to bolt verification onto a GPU marketplace. It starts from the verification requirement and builds compute around it. The approach is hardware-isolated execution via Trusted Execution Environments, with a developer experience that maps directly to cloud primitives.

EigenCompute is a verifiable offchain compute service built by EigenCloud that enables developers to run complex, long-running agent logic outside of a smart contract. It supports Docker and Kubernetes containers, meaning any AI agent codebase can be deployed on EigenCompute without rewriting. Every execution runs inside a Trusted Execution Environment (TEE): hardware-level isolation that ensures even the cloud operator cannot see or tamper with what's running.

The core mechanism: when you deploy to EigenCompute, your application, packaged as a standard Docker image, is loaded into the enclave. The Intel TDX hardware generates a cryptographic attestation: a signed hash of the exact Docker image running inside. The machine operator cannot forge this attestation without breaking Intel's hardware security model. Every deployment is permanently recorded onchain by its Docker digest, creating an immutable audit trail.
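To make the shape of that check concrete, here is a deliberately simplified sketch. It is not EigenCompute's API or Intel's quote format: real TDX attestations are signed with asymmetric keys chained to Intel and verified against that certificate chain, whereas this example stands in an HMAC key for the hardware root of trust. Every name here (`HARDWARE_KEY`, `attest`, `verify`) is hypothetical.

```python
import hashlib
import hmac

# Stand-in for the hardware root of trust (assumption for illustration only).
# Real TDX quotes use asymmetric signatures, not a shared HMAC key.
HARDWARE_KEY = b"hypothetical-hardware-root-key"


def image_digest(image_bytes: bytes) -> str:
    """Content digest of the image, in the familiar sha256:<hex> form."""
    return "sha256:" + hashlib.sha256(image_bytes).hexdigest()


def attest(image_bytes: bytes) -> dict:
    """What the enclave would emit: the measured digest plus a signature over it."""
    measured = image_digest(image_bytes)
    signature = hmac.new(HARDWARE_KEY, measured.encode(), hashlib.sha256).hexdigest()
    return {"digest": measured, "signature": signature}


def verify(attestation: dict, recorded_digest: str) -> bool:
    """Check the signature, then check the digest matches the recorded deployment."""
    expected = hmac.new(
        HARDWARE_KEY, attestation["digest"].encode(), hashlib.sha256
    ).hexdigest()
    signature_ok = hmac.compare_digest(expected, attestation["signature"])
    digest_ok = hmac.compare_digest(attestation["digest"], recorded_digest)
    return signature_ok and digest_ok


image = b"example container image contents"
recorded = image_digest(image)          # the digest recorded at deployment time

print(verify(attest(image), recorded))               # genuine image
print(verify(attest(b"modified image"), recorded))   # different code was run
```

The verifier never re-executes the workload. It only confirms that trusted hardware vouches for a digest and that the digest matches the one on record, which is what distinguishes this approach from fraud proofs.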

This is not a wrapper around existing cloud. It's a fundamentally different trust model: hardware attests to what code is running, and that attestation is anchored onchain. The platform is secured by $17.5 billion in restaked assets: if an operator produces a fraudulent attestation, they face automatic economic penalties through slashing. This is not reputational risk. It is direct financial loss.

Each application receives its own cryptographic identity and dedicated wallet. Only that specific app, running the verified Docker image inside the enclave, can retrieve the private key. Containers aren't just compute jobs; they become economic actors.

What This Means for Each Agent Category

Consider what this enables for the agents documented above.

When Cursor or Devin or Replit Agent autonomously deploys production code, EigenCompute can verify that a specific model version with specific inputs produced the exact output deployed. This creates an auditable chain of custody from intent to deployment, which is critical as autonomous coding agents ship code without human review.

When Harvey's agent drafts a $50M settlement agreement, or EvenUp's agent processes one of its 10,000 weekly cases, the attestation proves exactly how that document was generated: which model version, which inputs, which logic. This provides the evidentiary chain that law firms and regulated industries require.

When Fin resolves a ticket for $0.99, or Cognigy handles one of its billion annual voice interactions, or Sierra Agent makes a customer-facing decision worth retaining a customer, EigenCompute verification ensures the decision can be audited and reproduced. This protects both the business and the customer.

And for logistics, where autonomous AI decision systems are making thousands of routing, pricing, and carrier selection decisions per hour, EigenCompute becomes transformational. When an autonomous system routes a $2 million pharmaceutical shipment through a cold chain across three continents, every decision point needs a provable record of what code and inputs produced it. Verifiable builds complete the chain: source code to verifiable build to Docker image to TDX attestation to onchain record. Anyone can audit any link.

The Pricing Models Tell You Where Verification Becomes Essential

The $2.9 billion in agent revenue documented above is not generated uniformly. These agents have converged on four distinct pricing models, and each model has different implications for how much trust, and therefore how much verification, the system requires.

Per-resolution pricing is the most trust-dependent. Fin charges $0.99 per resolution. Cognigy charges per interaction. When revenue is directly tied to agent output, and the customer pays only when the agent delivers a result, the economic incentive to verify that result is maximized. The 15-25x cost reduction per ticket only works if the resolutions are actually correct.

SaaS subscription with agent capabilities is the familiar model. Cursor at $20-$40/month, Replit Agent with effort-based pricing, Harvey and Sierra Agent at enterprise rates. The difference from traditional SaaS: the value delivered per seat is an order of magnitude higher because the agent does the work, not the user. Cursor at $20/month delivers work that previously required a $150,000/year junior developer. Harvey delivers legal analysis that previously required $500/hour associates. Replit Agent builds entire applications that previously required a full-stack development team. The value-to-price ratio is 10-100x, which explains the explosive adoption and also explains why verified execution matters. The more value an agent delivers autonomously, the more you need to trust its output.

Compute consumption pricing (Devin's Agent Compute Units model) ties cost to compute resources consumed during autonomous work. This model aligns naturally with EigenCompute's infrastructure: as agents consume verifiable compute, pricing can be tied directly to attested compute units, creating a transparent, auditable billing system.

And the internal cost savings model (Klarna's $60M/year) doesn't generate external revenue but creates massive enterprise value. Every Fortune 500 company is running this calculation right now: what's the cost of our customer service operations versus the cost of deploying an AI agent?

Where This Goes: The Agent-to-Agent Economy

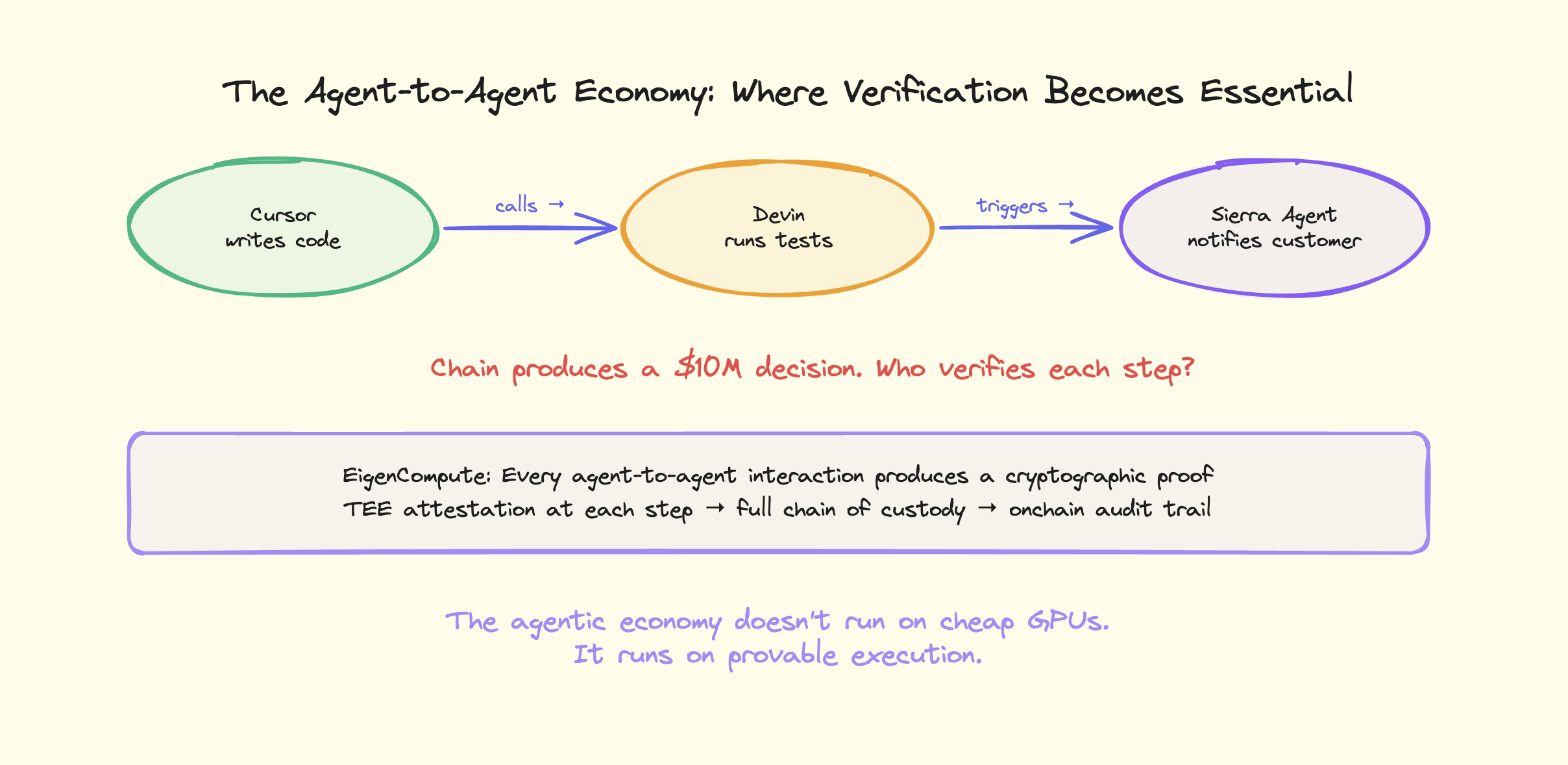

The next phase is not humans using agents. It is agents using agents. Cursor calls Devin for specialized tasks. Sierra Agent triggers logistics agents for order fulfillment. Harvey's legal agent coordinates with EvenUp's case management agents across jurisdictions.

This agent-to-agent economy requires infrastructure that doesn't exist in traditional cloud. When Agent A calls Agent B, which calls Agent C, and the chain produces a $10 million decision, you need verifiable compute at every step. EigenCompute's TEE-attested execution model is built for exactly this: every agent-to-agent interaction produces a cryptographic proof that can be independently verified.
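One way to picture verification "at every step" is a chain of receipts in which each agent's record commits to the one before it. The sketch below is an assumption about how such a chain could be structured, not a description of EigenCompute's actual proof format; the receipt fields, agent names, and digests are all hypothetical.

```python
import hashlib
import json


def receipt_hash(receipt: dict) -> str:
    """Deterministic hash of a receipt (keys sorted so ordering cannot vary)."""
    return hashlib.sha256(json.dumps(receipt, sort_keys=True).encode()).hexdigest()


def add_step(chain: list, agent: str, image_digest: str, output: str) -> None:
    """Append a receipt that commits to the previous receipt's hash."""
    chain.append({
        "agent": agent,
        "image_digest": image_digest,   # which attested code produced the output
        "output_hash": hashlib.sha256(output.encode()).hexdigest(),
        "prev": receipt_hash(chain[-1]) if chain else None,
    })


def verify_chain(chain: list) -> bool:
    """Every receipt must point at the hash of the receipt before it."""
    for i, receipt in enumerate(chain):
        expected_prev = receipt_hash(chain[i - 1]) if i > 0 else None
        if receipt["prev"] != expected_prev:
            return False
    return True


chain: list = []
add_step(chain, "agent-a", "sha256:aaa", "route shipment via carrier X")
add_step(chain, "agent-b", "sha256:bbb", "carrier X quote accepted")
add_step(chain, "agent-c", "sha256:ccc", "customs documents generated")
print(verify_chain(chain))

# Rewriting an earlier step breaks the link held by the next receipt.
chain[0]["output_hash"] = hashlib.sha256(b"route via carrier Y").hexdigest()
print(verify_chain(chain))
```

Linking alone does not protect the final receipt, since nothing points at it. In a design like this, the hash of the last receipt would need to be anchored somewhere tamper-evident, such as the onchain record described earlier.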

Sreeram Kannan laid out the framing at Digital Asset Summit: AI makes agents intelligent, crypto makes them investable. Together they create the possibility of a new kind of firm: software-native, asset-owning, investable, and global from inception. If Replit Agent can build entire applications from a sentence, and Cursor can hit $2B ARR by autonomously writing code, what happens when these agents operate entire companies? With 10 employees? With 1? With zero?

The autonomous company, an entity operated entirely by AI agents with humans serving as board members or regulators rather than operators, is not science fiction. It is the logical endpoint of the trends documented in this article. Every named agent here is getting more autonomous, not less. Cursor ships code without review. Replit Agent builds entire production applications from a single prompt. Logistics agents route freight without human involvement.

EigenCompute's verifiable compute is the trust layer that makes autonomous companies possible. When there's no human in the loop, cryptographic verification replaces human judgment as the accountability mechanism.

EigenCloud's April 2026 blog post articulated this: AI agents combined with verifiable compute and crypto ownership structures will create a trillion-dollar asset class. If an agent can autonomously operate a logistics company (generating revenue, managing operations, making decisions 24/7 without human intervention), then the agent itself becomes an economic entity. It needs a way to own assets, collect payments, and be held accountable for its decisions. EigenCompute provides the accountability layer. The verifiable compute proves every decision. The TEE isolation protects sensitive data. The restaked economic security enforces honesty through financial penalties.

Whether you buy the trillion-dollar framing depends on a lot of variables. But the technical foundation (verifiable execution plus app wallets plus attestation) creates a genuinely new design space for autonomous software that holds and deploys capital. That part is not speculative. It's deployed.

The Question This Article Exists to Ask

Grand View Research's $182.97 billion projection for 2033 assumes the current growth trajectory continues. Given that the agents listed here are already at $2.9B+ combined ARR and growing at 40-400% annually, that projection may be conservative.

But the market size is not the interesting question anymore. We are past "will AI agents make money." They are making money. $2.9 billion in combined ARR from agents you can name, with pricing models you can verify, growing at rates that break every SaaS benchmark ever recorded.

The interesting question is the one this entire article has been building toward: as these agents get more autonomous, as the decisions they make get more consequential, as the money at stake goes from millions to billions, who verifies that they did the right thing?

Right now, the answer is: nobody. That is not a technology problem. It is a trust problem. And it is the biggest unsolved problem in AI.

If agents are going to become companies, entities that own property, deploy capital, and operate as durable economic actors, then verifiable compute isn't a feature. It's the foundation. You can't invest in an entity whose execution you can't audit. You can't trust a firm whose code you can't verify. The agentic economy doesn't run on cheap GPUs. It runs on provable execution. And the platforms that figure this out first will be the ones trusted with real economic agency.

EigenCompute is building that layer. Docker-native. TEE-secured. Backed by $17.5 billion in restaked economic security. Every decision is provable. Every action is auditable. Every agent is accountable.

The AI agent era is not coming. It is here. The agents have names. And the race to make them trustworthy has barely begun.